Using a TV as a computer monitor can be a viable solution for those often hunched over their laptop and hurting their neck.

Maybe you’ve built a new PC and want to save some money on the monitor. Or perhaps you want a larger or a second screen to make multitasking easier.

Regardless, repurposing an old TV that’s collecting dust as a computer monitor is a great way to save some money.

But how do you use a TV as a computer monitor?

I’ll guide you through the process step by step below.

How to Use TV as a Computer Monitor

Step 1: Figure Out Which Cable You Need

Check the Inputs on Your TV

The first thing is to check which input your TV supports. Most modern TVs have HDMI input ports; however, there’s a small chance that your TV has a DVI input port.

Some TVs have VGA inputs that are designed for PC use. If your TV has this port, it will also have a PC mode “source” setting.

If you connect your PC to your TV via a VGA cable, the PC source setting is where the output will display.

Keep in mind that VGA carries video only. If you want your computer’s audio to play through the TV when using VGA, you’ll also need to connect the computer’s headphone jack to the TV’s audio input with a 3.5 mm auxiliary cable.

VGA is an older technology, and using it can be a little inconvenient. So, if your TV has both VGA and HDMI ports, use the HDMI port.

Check the Outputs of Your Computer

Next, you must figure out which output port your computer has.

If you’re using a laptop, check whether it has a built-in HDMI port, which makes things a lot easier. Many newer, thinner laptops have dropped it, so you may need to buy an adapter (typically USB-C to HDMI) that converts your computer’s output to an HDMI signal.

If you’re using a desktop PC, check to see if your graphics card has an HDMI port. Most modern cards have a full-size HDMI port, often alongside DisplayPort; a few compact cards use mini-HDMI instead.

However, if you have an older graphics card, it may only have a DVI port.

If your computer doesn’t have a graphics card, check the ports on your motherboard. Most motherboards have an HDMI output; however, if you have an older PC, you may find that your motherboard only has a VGA or DVI port.

In the best-case scenario, you’ll only need to buy an HDMI cable to connect your computer to your TV and use it as a monitor.

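But if your PC only has a DVI port or VGA port, there are cheap converters that you can use. DVI is a digital signal, so a simple DVI-to-HDMI adapter works, though it usually won’t carry audio. VGA is analog, so you’ll need an active VGA-to-HDMI converter, which often draws power over USB and takes audio from the headphone jack.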
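
If it helps to see the decision laid out, here is a minimal sketch of the cable choice described above. The function name `pick_connection` and the port labels are my own for illustration, and the order simply follows this article’s preference for the TV’s HDMI port.

```python
def pick_connection(tv_inputs, pc_outputs):
    """Suggest a cable or adapter for a TV/computer pair.

    Both arguments are collections of port names such as {"HDMI", "VGA"}.
    The preference order is an assumption based on this article:
    HDMI first, then DVI or VGA through a converter, then plain VGA.
    """
    tv = {port.upper() for port in tv_inputs}
    pc = {port.upper() for port in pc_outputs}

    if "HDMI" in tv and "HDMI" in pc:
        return "HDMI cable"
    if "HDMI" in tv and "DVI" in pc:
        return "DVI-to-HDMI adapter + HDMI cable"
    if "HDMI" in tv and "VGA" in pc:
        return "VGA-to-HDMI converter + HDMI cable"
    if "VGA" in tv and "VGA" in pc:
        # VGA carries no audio, so a separate audio cable is needed.
        return "VGA cable + 3.5 mm audio cable"
    return "no match found: check for another adapter"


print(pick_connection({"HDMI", "VGA"}, {"DVI"}))
```

For a TV with HDMI and VGA inputs and a PC with only DVI, this suggests a DVI-to-HDMI adapter plus an HDMI cable.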

Connect one end of the HDMI cable to the adapter, and plug it into the computer. If you’re not using an adapter, connect one end of the HDMI cable directly to the computer.

Plug the other end of the HDMI cable into the TV.

With this, most of the legwork is done—all you need to do next is configure your TV and computer to work correctly.

Step 2: Switch To The Right Input And Output

This is the easiest step. Grab your TV’s remote, and find the “Source” button. When you press it, a menu will pop up, showing you the available ports.

If you’ve connected your computer to the TV via HDMI as I recommended above, navigate to the corresponding HDMI source.

If you don’t know which HDMI port you connected the cable to, don’t worry. You can either go back and check or go the trial and error route and navigate to every HDMI source till you find it.

It doesn’t take as long as you would think.

If you’re using a laptop, you may need to visit your computer’s display settings and configure it to use the TV screen as an output.

If you’re using a Windows laptop, open the Start menu, navigate to Settings > System > Display, and check that your computer detects the TV as a display.

If you’re on a Mac, open System Settings (called System Preferences on older versions of macOS) from the Apple menu and find the “Displays” section. The TV should be listed there if the connection is working.

However, most laptops start to output the image automatically. You most likely won’t need to configure anything.

The computer’s output should pop up on the screen as you browse the different sources on your TV.

But chances are, the image either extends beyond the edges of the screen or uses the wrong resolution.

Step 3: Tweak The Resolution and Display Area

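If you have a Windows computer, go to the Start menu, navigate to Settings > System > Display, and set the display resolution to your TV’s native resolution. For a Full HD TV, that is 1920 x 1080.

If your TV is a 4K model, select 3840 x 2160, which is the native resolution of consumer 4K TVs. Choose 4096 x 2160 only if your TV’s specifications list it as the panel’s native resolution.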
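
The rule of thumb above can be written as a short sketch: pick the TV’s native resolution if the computer offers it, otherwise the largest mode that still fits inside it. The function name `pick_resolution` and the fallback rule are my own assumptions for illustration, not something Windows does for you.

```python
def pick_resolution(native, available):
    """Return the (width, height) mode to select for a TV.

    native:    the TV panel's native resolution, e.g. (3840, 2160)
    available: the modes the computer offers, as (width, height) tuples
    Returns None if no offered mode fits within the panel.
    """
    if native in available:
        return native
    # Fall back to the mode with the most pixels that does not
    # exceed the panel in either dimension.
    fits = [m for m in available if m[0] <= native[0] and m[1] <= native[1]]
    if not fits:
        return None
    return max(fits, key=lambda m: m[0] * m[1])


modes = [(1920, 1080), (3840, 2160), (4096, 2160)]
print(pick_resolution((3840, 2160), modes))
```

For a 4K TV offered all three modes, this picks 3840 x 2160 rather than the wider 4096 x 2160.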

There’s a chance that the picture still spills past the edges of the TV, cutting off the taskbar and the borders of your desktop. This is called overscan.

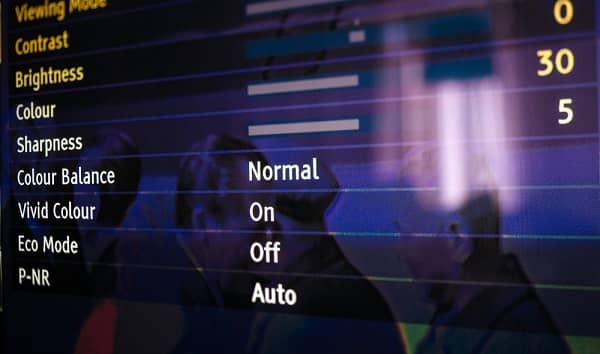

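You can fix it by navigating to the settings menu on your TV and finding the “Display Area” option. The name varies by manufacturer, so on other TVs look for a picture-size setting such as “Just Scan,” “Screen Fit,” or “Full Pixel.”

If the “Auto Display Area” option is on, turn it off, and change the display area to “+1.” This should make the output fit the screen without cropping.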

And that’s it: your TV is now your computer’s monitor!

However, in certain circumstances, you’re better off using a monitor instead of a TV. I cover this in the frequently asked questions section below.

Frequently Asked Questions

Can You Use a Computer Monitor as a TV?

You could use your computer monitor as your TV; however, most monitors don’t have speakers on them and tend to be smaller.

You won’t enjoy a movie or a show on a smaller 20”-30” monitor as much, and you will need to spend a good amount of money buying a sound system.

Also, computer monitors don’t come with TV tuners, so you’ll need to buy an external tuner (ATSC-compliant in North America) if you want to watch live over-the-air TV.

While it can be done, it’s not a good idea.

Why is a Monitor Better Than a TV for Computers?

One reason is power: TVs generally consume more power than monitors, largely because they are bigger and brighter. Keeping a TV on all day will produce a bigger electricity bill than using a monitor instead.
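
To get a feel for the difference, here is a rough sketch of the arithmetic. The wattages, hours, and electricity price below are illustrative assumptions, not measurements; check the labels on your own devices and your utility bill for real figures.

```python
def annual_cost(watts, hours_per_day, price_per_kwh):
    """Estimate the yearly electricity cost of running a display."""
    kwh_per_year = watts / 1000 * hours_per_day * 365
    return kwh_per_year * price_per_kwh


# Assumed example values: a 100 W TV, a 30 W monitor,
# 8 hours of use per day, and electricity at 0.15 per kWh.
tv = annual_cost(100, 8, 0.15)
monitor = annual_cost(30, 8, 0.15)
print(f"TV: {tv:.2f}  monitor: {monitor:.2f}  difference: {tv - monitor:.2f}")
```

With these assumed numbers, the TV costs about 44 per year to run and the monitor about 13, so the gap is real but modest for a typical workday.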

Also, if you have a large 60-inch TV on your desk, it’s more likely to strain your neck and give you headaches than make multitasking easier.

Generally better color accuracy and higher refresh rates are two more reasons why monitors are better than TVs for computer use.

Why is a Monitor Better Than a TV for Computer Gaming?

Gaming monitors tend to have higher refresh rates (144Hz and above), which gives competitive gamers a significant advantage. Many TVs have a refresh rate of 60Hz, meaning they show at most 60 frames per second, which isn’t “bad,” but isn’t ideal for fast-paced gaming. Some newer TVs do support 120Hz.
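
A quick way to see what refresh rate means in practice is to convert it into the time each frame stays on screen, which is simply 1000 ms divided by the rate. The helper name `frame_time_ms` is my own for this sketch.

```python
def frame_time_ms(refresh_hz):
    """Return how long one frame is displayed, in milliseconds."""
    return 1000 / refresh_hz


for hz in (60, 120, 144):
    print(f"{hz} Hz -> {frame_time_ms(hz):.2f} ms per frame")
```

At 60Hz a new frame arrives roughly every 16.67 ms, while at 144Hz it arrives about every 6.94 ms, which is why fast motion looks smoother on a high-refresh display.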

If you’re playing regular single-player games, though, it won’t make much of a difference.

Why is a Monitor Better Than a TV for Photo and Video Editing?

TVs are designed to please the eyes, which means the colors aren’t always accurate. On the other hand, monitors aimed at editing work are designed for high color accuracy, so the photos and videos you edit look consistent when viewed on other displays.